Table of contents

1. You are not the user

2. ROI of UX: More than just numbers

3. Usability-Test vs. Expert Reviews

4. Study insights

5. Tips, to assist you in the evaluation process

6. Conclusion

1. You are not the user

In heated debates about deadlines and budgets, UX activities such as usability tests are often the first to be cut. “We’ll do it later!” or “We know our customers and understand what they want.” are common arguments. However, this mindset leads to a familiar pitfall: “You are not the user!”.

Source: jonaskamber.com

Neglecting UX might mean missing shifts in customer needs, which can lead to revenue losses or, ultimately, even the failure of a business. Today, Netflix and HBO are still thriving, whereas former competitors like Blockbuster or Hollywood Video have faded into obscurity.

I have always tried to explain the importance and value of UX to people, often with arguments that lacked strength or were not persuasive enough. For example, I would repeat statements such as “Every dollar invested in UX yields a $100 profit”. Despite the validity of these cost-benefit arguments, some people simply don’t seem to hear them, or feel that the benefits are too marginal. These sceptics remain unimpressed, no matter how compelling the arguments are. Ironically, the UX industry itself has a poor UX.

Another approach to explaining the value of UX is the one proposed by Aly and Sturm (2019), which emphasizes the risks of inaction regarding UX. Imagine what you’re missing out on if you don’t invest in UX. Do you want to become the next Hollywood Video? This method underscores the potential losses and negative consequences companies might face if they disregard the importance of UX. It highlights the necessity of making informed decisions and understanding the overt as well as hidden costs of inaction.

2. ROI of UX: More than just numbers

The discussion illustrates that ignoring user experience is risky, but how do we measure the actual value of UX initiatives? Calculating the costs is challenging – determining the benefits even more so. Therefore, making a statement about the return on investment (ROI), i.e., the comparison between invested costs and the resulting profit, is nearly impossible.

There are countless articles and books on the topic, even a dedicated book titled “Cost-Justifying Usability – An Update for the Internet Age”. However, it was published in 2005 and, ironically, has received no updates since.

But how much does it cost to review UX? From my experience, an expert review can be done in one to three working days, whereas a professional user test can easily require five to ten days. Comprehensive qualitative interview studies take even longer. But it’s not just about the costs. Not everything significant in user experience can be quantified.

Calculating the benefits is even trickier. In some cases, you can define clear key performance indicators (KPIs), such as time savings from UX optimisations, and then express the benefit in euros. For instance: thanks to a usability test, an application was improved so that two employees each save 15 minutes per day. At about 20 working days, that amounts to around 10 hours a month and results in savings of approximately 700 euros per month. This way, you can weigh the effort against the return.
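The arithmetic behind this example can be sketched in a few lines of Python. This is only an illustration: the 20 working days per month, the hourly cost of 70 euros, and the five-day test effort are assumptions chosen so that the numbers match the example above, and the function names are made up for this sketch.

```python
def monthly_savings(employees, minutes_saved_per_day, working_days, hourly_cost):
    """Return (hours saved per month, money saved per month)."""
    hours = employees * minutes_saved_per_day * working_days / 60
    return hours, hours * hourly_cost


def payback_months(test_cost, savings_per_month):
    """Months until the savings have covered the cost of the test."""
    return test_cost / savings_per_month


# Assumed values: 20 working days per month, 70 euros per hour.
hours, savings = monthly_savings(
    employees=2, minutes_saved_per_day=15, working_days=20, hourly_cost=70.0
)

# Assumed effort: a five-day usability test at 8 hours per day, same hourly cost.
test_cost = 5 * 8 * 70.0
months = payback_months(test_cost, savings)

print(f"{hours:.0f} hours and {savings:.0f} euros saved per month")
print(f"Test cost of {test_cost:.0f} euros is recovered after {months:.0f} months")
```

With these assumed inputs the test pays for itself after four months. Changing any single input, for example the number of affected employees, shifts the result considerably, which is one reason such ROI figures should be read as rough estimates.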

However, this can’t always be accurately predicted, and not all usability tests are created equal. Factors such as the selected test participants, the number of tasks set, the moderation or the prototype itself, and much more, influence the test findings.

That’s quite a lot! Should we just ask UX professionals? Read on to find out!

3. Usability-Test vs. Expert Reviews

Undoubtedly, usability tests involve significant effort – both in terms of time and finances. Therefore, many believe it’s much cheaper to ask individuals with UX expertise, who can then advise on what changes should be made to a product, website, etc.

In my master’s thesis, I compared three UX evaluation methods to answer the question of their respective costs and benefits. I selected the following methods:

- Moderated usability test with 22 participants (recruited with TestingTime)

- Automated usability test (using the Solid tool) with 22 participants

- Expert review (heuristic evaluation) with five experienced UX professionals

All three methods were used to evaluate the same prototype of an online application platform.

You might wonder why I chose 22 participants for the moderated usability test. The idea was to challenge the industry-standard rule of the “Magic Number 5” (“5 participants uncover 80% of the issues”) and to enable a clear comparison between the moderated and automated methods.

4. Study insights

During my investigation of various usability evaluation methods, I gained insights that I will detail below.

4.1 Most issues are identified with a moderated usability test

The moderated usability test revealed the highest number of issues. This was expected since, for instance, follow-up questions can be asked, allowing for additional insights (probing).

One could argue that 22 participants will identify more issues than five experts simply because there are more of them. However, my research showed that on average, five users together could identify more problems than five experts.

4.2 Good value for money with automated usability tests

Considering the overall costs of conducting the test (working hours, license fees for tools, etc.) in relation to the number of identified problems, the automated method had the lowest cost per identified issue, offering the best value for money.
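To make the metric concrete, here is a minimal Python sketch of a cost-per-issue comparison. The cost and issue figures are hypothetical placeholders, not the results of my study, and the `Method` class is invented for this illustration.

```python
from dataclasses import dataclass


@dataclass
class Method:
    name: str
    total_cost: float   # working hours, license fees, incentives, etc.
    issues_found: int   # number of distinct problems identified

    @property
    def cost_per_issue(self) -> float:
        return self.total_cost / self.issues_found


# Hypothetical placeholder figures, NOT the numbers from the study.
methods = [
    Method("moderated usability test", total_cost=10_000, issues_found=40),
    Method("automated usability test", total_cost=4_000, issues_found=25),
    Method("expert review", total_cost=3_000, issues_found=15),
]

for m in sorted(methods, key=lambda m: m.cost_per_issue):
    print(f"{m.name}: {m.cost_per_issue:.0f} per identified issue")

best = min(methods, key=lambda m: m.cost_per_issue)
```

Note that the cheapest method overall is not necessarily the one with the lowest cost per issue: what counts is the ratio of cost to findings.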

4.3 Opinions of UX professionals vary widely

The expert review clearly showed that the opinions of professionals differed significantly and different issues were identified. This is problematic in practice since the identified issues largely depend on the individual conducting the evaluation. To make matters worse, in everyday practice, expert reviews are rarely conducted with more than one professional.

4.4 Common mistakes with UX professionals

Furthermore, even UX professionals can make errors, either by missing actual issues (miss) or predicting problems that either don’t manifest or are infrequent (false alarm). In the expert reviews from the study, only about a third of the issues that actually arose in the usability tests with participants were identified, and about half of the problems were completely overlooked.

This indicates that companies relying solely on expert reviews risk wasting resources on fixing things that aren’t genuine problems, while overlooking real ones. A silver lining? UX professionals performed better when it came to more serious issues.
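The categories of miss and false alarm can be expressed as simple set operations. The following Python sketch assumes that issues from both sources have already been matched to shared identifiers. The issue names are invented for illustration and `compare_findings` is a hypothetical helper.

```python
def compare_findings(predicted: set[str], observed: set[str]) -> dict[str, set[str]]:
    """Compare issues predicted by experts with issues observed in user tests."""
    return {
        "hits": predicted & observed,          # predicted and actually observed
        "misses": observed - predicted,        # observed, but not predicted
        "false_alarms": predicted - observed,  # predicted, but never observed
    }


# Invented example data: issue identifiers after matching both lists.
observed = {"login-label", "upload-error", "back-button", "date-format"}
predicted = {"login-label", "date-format", "colour-contrast"}

result = compare_findings(predicted, observed)
hit_rate = len(result["hits"]) / len(observed)

for category, issues in result.items():
    print(f"{category}: {sorted(issues)}")
print(f"Share of observed issues that were predicted: {hit_rate:.0%}")
```

In practice, the laborious part is the matching itself, i.e., deciding whether an expert’s finding and a user’s observed problem describe the same issue.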

Overall, it’s always crucial to choose the right method depending on the question at hand, with a focus on the real problems users face. It’s worthwhile to invest time in formulating a clear-cut question because the vaguer the question, the murkier the results will be.

5. Tips, to assist you in the evaluation process

Based on the data and insights from my study, here are four tips to support you during the evaluation process:

Tip 1: Rely on usability tests

From my experience and research, moderated usability tests are an effective means of identifying problems. If the budget is tight, an automated usability test is an affordable yet effective alternative. If resources are more limited still, an expert review is an option, but keep in mind the risk of misses and false alarms.

Tip 2: Question the Magic Number 5

Do not blindly follow the rule of thumb “Five users find 80% of the problems” (“Magic Number 5”), as it’s not entirely accurate.
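Why the rule is only a rough guide becomes clear from the formula usually given for it: the expected share of problems found is 1 - (1 - p)^n, where p is the probability that a single participant encounters a given problem and n is the number of participants. The rule of thumb is commonly traced back to an average p of about 31% reported by Nielsen and Landauer. The Python sketch below is an illustration of that formula, with `share_found` as a hypothetical helper name.

```python
def share_found(detection_probability: float, participants: int) -> float:
    """Expected share of problems seen at least once: 1 - (1 - p)^n."""
    return 1 - (1 - detection_probability) ** participants


# p = 0.31 is the average value commonly cited for the rule of thumb;
# p = 0.10 stands for rarer problems that only 1 in 10 users runs into.
for p in (0.31, 0.10):
    print(f"p = {p:.2f}: {share_found(p, 5):.0%} of problems with 5 participants")
```

With p = 0.31, five participants uncover about 84% of the problems. For problems that affect only 1 in 10 users, the same five participants uncover about 41%.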

Instead, ask yourself the following:

Impact: Do I want to identify usability problems that many of my users experience or those that are rarer?

Certainty: How much do I want to leave the results to chance? The 80-20 rule (Pareto Principle) offers a good guide value here: aim for 80% certainty. Think of it like the shell game, where you have to guess which shell hides the ball. The higher the certainty, the better your chances of picking the right shell rather than relying on luck.

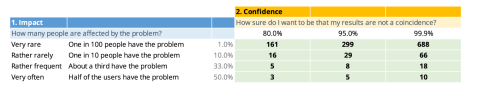

The following table helps you choose the number of test participants. Here is how to read it: if you want to identify usability problems that affect 1 out of 10 users (i.e., 10%) and be 80% certain of observing each such problem at least once, you should recruit 16 people for your usability test.

Number of participants by impact and certainty (Sauro & Lewis, 2016)
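Values like these can be reproduced with a short calculation. The Python sketch below assumes the usual binomial model, in which the chance of observing a problem at least once with n participants is 1 - (1 - p)^n. Solving for n gives n = ln(1 - certainty) / ln(1 - p), rounded up. The function name `participants_needed` is made up for this sketch.

```python
import math


def participants_needed(problem_frequency: float, certainty: float) -> int:
    """Smallest n such that 1 - (1 - p)^n is at least the desired certainty."""
    n = math.log(1 - certainty) / math.log(1 - problem_frequency)
    return math.ceil(n)


# Example from the text: problems affecting 10% of users, 80% certainty.
print(participants_needed(0.10, 0.80))  # 16

# A small overview for other combinations of impact and certainty.
for frequency in (0.31, 0.10, 0.05):
    row = [participants_needed(frequency, c) for c in (0.80, 0.90, 0.95)]
    print(f"problem affects {frequency:.0%} of users -> participants: {row}")
```

The rarer the problem and the higher the desired certainty, the more participants are needed. For problems with a frequency of 31%, the calculation yields the familiar five participants at 80% certainty.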

Tip 3: Use the Four-Eyes Principle

To ensure usability tests are meaningful and offer an optimal cost-benefit ratio, I recommend having evaluations performed by multiple individuals. A quick and superficial evaluation increases the risk of overlooking crucial errors, thereby diminishing the validity of the tests. This also holds true for expert reviews.

Tip 4: Focus on the most serious problems

Especially in expert reviews, I advise concentrating primarily on the most severe problems. This enhances the likelihood of recognizing issues genuinely relevant to users and avoids unnecessary false alarms.

6. Conclusion

Time and budget can limit whether and how many people you include in a usability test. Remember, if you involve too few or no participants, you risk making incorrect decisions. Detecting and rectifying issues early on is much simpler and cheaper than making corrections later.

Making decisions under uncertainty is the norm in business operations. Ensure you’re not wasting resources based on incorrect assumptions. If you’re questioning the costs of usability tests, it might indicate doubts about their utility. Seek the right advice, and use tests not just for assessment but also for learning and building trust with stakeholders.

Always remember: Every step towards enhancing user experience is worthwhile. It’s better to act than to remain inactive. As Jakob Nielsen put it: “Just do it. The true choice is not between discount and deluxe usability engineering. The true choice is between doing something and doing nothing. Perfection is not an option. My choice is to do something!”

Or, as pop star Nicki Minaj said, “Do your f*** research.”